This debate about AI models and their providers did not start yesterday. That is why we defined model flexibility as a design principle of our AI-powered workflows early on. Which models we connect to the interfaces of our foresight and innovation processes in PEACOQ can be reassessed at any time - depending on what we and our clients consider appropriate.

Our free Scenario GPT was deliberately designed as a low-threshold entry point and, as a format, was tied to a single provider. For productive, platform-supported workflows, however, we rely on model flexibility, clear data structures, and traceable results within our data space. What exactly does that mean? Here is a brief overview.

The trigger is serious, and it concerns more than OpenAI. Across the entire tech industry, enormous sums are currently flowing into US politics: major donations to Super PACs, millions for inauguration ceremonies, close ties to the Trump administration. But with OpenAI, it strikes a particular nerve - because the company was originally founded as a nonprofit organization, with the stated goal of developing AI for the benefit of all humanity.

Little remains of that ambition. OpenAI co-founder Greg Brockman and his wife donated 25 million dollars to a Trump-aligned Super PAC. CEO Sam Altman gave one million dollars for Trump’s inauguration. The company is working on contracts with the Pentagon, has quietly withdrawn its self-imposed commitment against military projects, and reports show that the US immigration agency ICE uses AI tools based on GPT models. The conversion from a nonprofit organization into a for-profit company throws this shift into even sharper relief.

To be clear: OpenAI is not an isolated case. Other tech giants - from Google to Amazon to Meta - are also investing heavily in political networks and government contracts. The question is therefore broader: which companies do we entrust with our AI infrastructure - and are we making that decision consciously?

For us at Schaltzeit, this was the impetus to translate a conviction we have held for some time even more consistently into our products and processes: model flexibility.

The Convenience Trap

When someone says “AI” today, most people think of ChatGPT. That is understandable - OpenAI created an impressively simple interface early on that gave millions of people access to generative AI. And the company continues to bet on exactly this: habit, and the tacit assumption that there are no serious alternatives.

But this assumption is becoming more fragile by the month. The AI market has fundamentally changed. Models from Anthropic, Google, xAI, Meta, Mistral, or DeepSeek deliver comparable - or even better - results in many areas. What remains, above all, is convenience. And the question of whether we want to be guided by it.

What We Observe Technically

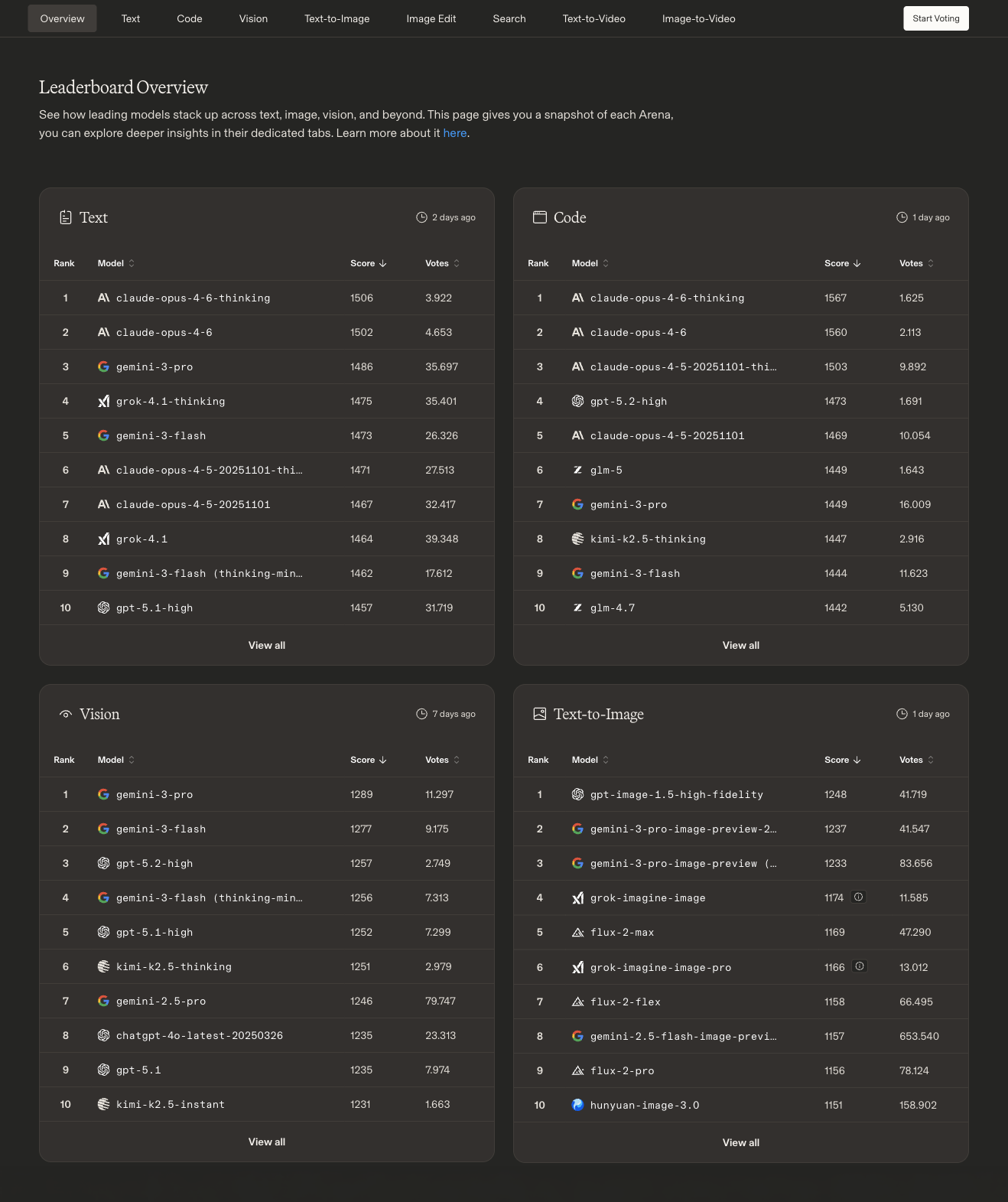

We continuously monitor the AI model landscape - not out of curiosity, but because it is crucial for our work and our products. The current benchmarks from LM Arena are unambiguous: no single provider dominates across all categories.

In the text domain, Anthropic’s Claude Opus 4.6 currently leads the rankings - closely followed by Google’s Gemini 3 Pro and xAI’s Grok 4.1. OpenAI’s current GPT-5.1 lands in 10th place. The gap between first and tenth place? Just three percent. In the code domain, the picture is similar: Claude Opus 4.6 leads, followed by its predecessor version. OpenAI’s GPT-5.2 reaches 4th place - on par with Chinese models like Kimi and GLM.

It gets particularly interesting with vision and search: Google’s Gemini 3 Pro dominates both visual tasks and web-based search. In image generation, OpenAI’s GPT-Image has a slight lead, closely followed by Gemini and Grok. And in the video space, Google Veo, Grok, and OpenAI’s Sora are in a tight race.

The message is clear: there is no longer “the one best model.” Depending on the task - whether text analysis, coding, image processing, or research - different providers lead. Anyone who ties themselves to a single one is consciously sacrificing performance.
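One way to act on this is a simple routing table that maps each task category to whichever model currently leads it. What follows is a minimal sketch in Python, assuming the rankings cited above; the names `LEADERS`, `DEFAULT_MODEL`, and `pick_model` are hypothetical, and the table is a snapshot that would need regular updating rather than a recommendation.

```python
# Snapshot of the leaders named in this article; illustrative only and
# expected to go stale as the rankings change.
LEADERS = {
    "text": "Claude Opus 4.6",
    "code": "Claude Opus 4.6",
    "vision": "Gemini 3 Pro",
    "search": "Gemini 3 Pro",
    "image_generation": "GPT-Image",
}

# Assumption: unknown task categories fall back to the text leader.
DEFAULT_MODEL = "Claude Opus 4.6"


def pick_model(task: str) -> str:
    """Return the model configured for a task category, or the default."""
    return LEADERS.get(task.strip().lower(), DEFAULT_MODEL)


if __name__ == "__main__":
    for task in ("text", "vision", "translation"):
        print(f"{task}: {pick_model(task)}")
```

The point of the design is that the mapping is data, not code: when the rankings shift, only the table changes.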

Beyond pure model quality, there is another aspect that is often underestimated in daily work: tokens - the small units of text in which AI models read input and produce output. Every query, every response, every piece of context consumes tokens. And this is precisely where providers differ significantly. Anyone who works intensively with Claude, for example, quickly reaches the usage limits on the Pro plan - sometimes in the middle of a work process. Upgrading to higher tiers costs up to 200 dollars per month. The situation at OpenAI is similar: anyone who uses AI tools for complex tasks like coding or data analysis burns through token budgets at a rapid pace.

The pricing models are far from transparent. Token costs vary depending on the model, context length, and type of use - and change regularly. For organizations that use AI as a fixed component of their workflows, this quickly becomes a planning uncertainty. Here too, open-source models offer a way out: anyone who works locally - for example with Ollama or comparable tools - has no rate limits, no monthly caps, and full control over costs.
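To make the planning problem tangible, here is a rough sketch of how monthly token spend could be estimated and compared. All prices are invented placeholders, not real provider prices; `Pricing`, `PLANS`, and `monthly_cost` are hypothetical names, and the local entry only reflects the absence of per-token fees, not hardware or electricity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Price per one million tokens, in US dollars (placeholder values)."""
    input_per_million: float
    output_per_million: float


def monthly_cost(pricing: Pricing, requests: int,
                 input_tokens: int, output_tokens: int) -> float:
    """Estimate monthly spend for `requests` calls of a fixed average size."""
    per_request = (input_tokens * pricing.input_per_million
                   + output_tokens * pricing.output_per_million) / 1_000_000
    return requests * per_request


# Hypothetical price points, NOT real provider prices.
PLANS = {
    "hosted_a": Pricing(input_per_million=3.0, output_per_million=15.0),
    "hosted_b": Pricing(input_per_million=1.0, output_per_million=4.0),
    # Local model: no per-token fee; hardware and power are not modeled.
    "local": Pricing(input_per_million=0.0, output_per_million=0.0),
}

if __name__ == "__main__":
    for name, pricing in PLANS.items():
        cost = monthly_cost(pricing, requests=10_000,
                            input_tokens=2_000, output_tokens=500)
        print(f"{name}: ${cost:,.2f} per month")
```

Because token prices change regularly, an estimate like this is only as good as its most recent price update.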

Model flexibility therefore means not only being able to choose the qualitatively best model for a task. It also means not being dependent on the pricing structures and usage restrictions of a single provider.

When Tools Have an Attitude

The technical side alone would be reason enough to think about model diversity. But the political developments in the AI industry turn a technical consideration into a values-based decision.

This is not about pillorying individual companies - the entanglements between tech corporations and politics are systemic in nature. It is about a fundamental question: when we rely on AI tools in our daily work, what assumptions do we make about the companies behind them? And what happens when the conditions change?

That OpenAI draws particular attention has to do with how far it has fallen. A company that set out with the promise of developing AI for the good of humanity and is now associated with military contracts and major political donations has done more than change strategic course. It has broken with its own founding values.

In Europe, there is an additional dimension: digital sovereignty. Over 80 percent of digital infrastructure in the EU comes from providers outside Europe. Projects like Mistral AI in France or the German SOOFI project - an open-source language model funded with 20 million euros - are attempting to create counterweights. Things are moving - but the road is still long.

Model Flexibility at PEACOQ: Our Answer

All of these developments - technical, political, economic - have strengthened our resolve to stay on a path we have long pursued: model flexibility as a design principle.

In our platform PEACOQ (peacoq.net), we are increasingly integrating AI features that we develop ourselves. Model flexibility is not a retrofit feature but a foundational architectural decision: we are building PEACOQ so that our clients can decide for themselves which language model they want to use for their use cases. We do not make that choice - the people working with the platform do.

Concretely, this means: whether a team prefers to work with Claude, Gemini, an open-source model like Llama, or a locally hosted solution - PEACOQ is meant to enable this freedom of choice. We believe that organizations looking to envision and shape futures and innovations with AI should not become dependent on a single provider.
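As an illustration of the general idea, and explicitly not PEACOQ’s actual implementation, here is a minimal sketch of a provider-agnostic interface in Python. Every name in it (`TextModel`, `EchoModel`, `REGISTRY`, `get_model`, `summarize`) is hypothetical, and the backends are stand-ins that echo the prompt instead of calling a real provider.

```python
from typing import Callable, Dict, Protocol


class TextModel(Protocol):
    """Anything that can turn a prompt into a completion."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend so the sketch runs without network access."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


# Registry of backend factories, keyed by the name a client would select.
# A real system would register provider-specific adapters here instead.
REGISTRY: Dict[str, Callable[[], TextModel]] = {
    "claude": lambda: EchoModel("claude"),
    "gemini": lambda: EchoModel("gemini"),
    "llama-local": lambda: EchoModel("llama-local"),
}


def get_model(choice: str) -> TextModel:
    """Resolve a client's configured choice to a backend instance."""
    try:
        return REGISTRY[choice]()
    except KeyError:
        raise ValueError(f"unknown model choice: {choice!r}") from None


def summarize(model: TextModel, text: str) -> str:
    """Workflow code depends only on the TextModel interface."""
    return model.complete(f"Summarize: {text}")


if __name__ == "__main__":
    print(summarize(get_model("gemini"), "trend report"))
```

With a structure like this, switching providers means changing one configuration value rather than rewriting the workflow.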

In our foresight processes and workshop formats, we also rely on this diversity. Next week, for example, we will be visiting the Bundeswehr Planning Office as part of a thematic workshop on “AI in Strategic Foresight.” There too, the question of how to deal with dependencies on individual AI providers will be relevant - especially in sensitive application contexts.

Model flexibility is more than a technical feature for us. It is an expression of a fundamental principle: anyone who wants to consciously shape futures and innovation must also be able to consciously choose the tools they use.

What If…?

From futures research, we know it is important to make assumptions visible. So we ask: what assumptions actually underlie our AI usage?

“OpenAI will always have the best model” - an assumption clearly refuted by current benchmarks. “One provider is enough” - an assumption that ignores technical and political risks. “Ethics are secondary in technology decisions” - an assumption we do not share.

Various scenarios are conceivable: a world in which a single company dominates the AI market and thereby also influences which questions are asked and which perspectives become visible. Or a world in which diversity of models also enables diversity of perspectives - in which organizations consciously decide whom they entrust with their data, their processes, and their values.

We prefer to work on the second scenario.

💬 How Do You See It?

How do you deal with the question of which AI models you use - and why? Is model choice a purely technical decision for you? Or do values, sovereignty, and independence also play a role?

We look forward to hearing from you - write to us by email or on LinkedIn, or talk to us directly.